Chapter 01

Designing Interfaces

As I noted in the book’s overview, this is a work in progress and I’m sharing it here in draft form. The overall book isn’t finished, but I hope the chapters I’m publishing have some value as I finalise work on the book.

In this chapter I’ll explore how we arrived at where we are today. I’ll provide a brief history of user interface design – drawing from principles of human-computer interaction (HCI) – so that the lessons we’ve learned from the history of UI design aren’t lost.

I’ll also stress the importance of understanding metaphors and mental models, which underpin UI design. Lastly, I’ll detail a number of current user interface design systems, including Apple’s Human Interface Guidelines, Google’s Material Design and IBM’s Living Language.

Overview

I’m a firm believer in drawing my teaching materials from the real world. As an Associate Senior Lecturer at Belfast School of Art (teaching half-time), I’m fortunate to divide my time between:

- real-world design projects for a wide range of clients, both large (EA) and small (Niice); and

- teaching students on my undergraduate Interaction Design programme.

Everything in this book (and its accompanying materials) has been tried and tested. I’ve drawn the examples from research I’ve undertaken, client projects I’ve worked on and – where appropriate – samples of my students’ work.

I’ve included my students’ work because I’m proud of what they’re doing and – equally importantly – because these are relatively young students who have come a long way with their User Interface (UI) design in a short space of time. I hope their work inspires you to do similarly impressive work.

In this first chapter I’ll set the scene, providing a little history and context. If you’re up to speed on the history of user interface design, and understand the importance of metaphors and mental models, you might wish to fast-forward to Chapter 2: The Building Blocks of Interfaces, where I get into hands-on practicalities.

UI design stretches back to the early GUIs of the 1970s and the emergence of computers as devices that were available beyond the confines of universities and big businesses.

The rise of the personal computer – a revolutionary idea in its time – brought with it the need for a way to interact with computers that was as easy and friendly as possible.

This intense period in computing history saw the emergence of the field of Human-Computer Interaction (HCI), which focuses on the design of computer technology and, in particular, the interaction between humans (users) and computers.

Section 1: The ‘UI’ in ‘GUI’

Before I dive into the depths of user interfaces (UIs), I think it’s important to get some definitions out of the way. The term ‘UI’, as we know and love it today, has its origins in the 1970s term ‘GUI’, an abbreviation of graphical user interface.

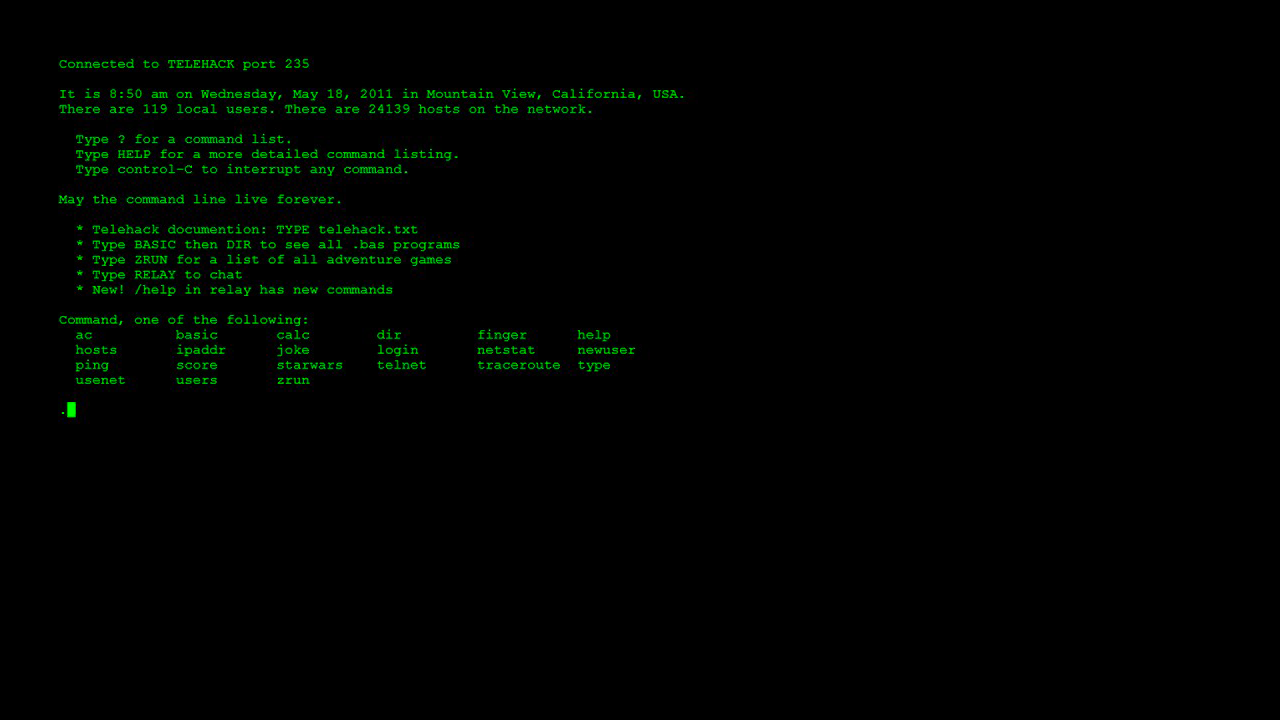

UIs might feel commonplace to us today, but when they were first imagined they represented a fundamental shift in how we interacted with computers. Before the emergence of GUIs, working with a computer involved typing arcane commands into a text-based terminal using a command-line interface (CLI), a little like the following Unix command, which displays a calendar for the current month:

cal

To work with a computer, a user first needed to ‘learn its language’, which was no small task. As Eric S. Raymond puts it in ‘The Art of Unix Usability’ (a wonderful, lightly styled HTML website):

These command-line interfaces placed a relatively heavy mnemonic load on the user, requiring a serious investment of effort and learning time to master.

The emergence of graphical user interfaces allowed for a much friendlier (and easier to learn) way of interacting with computers. This shift from text-based interaction to graphical interaction changed everything.

On 1 March 1973, Xerox PARC introduced the Alto personal computer, the first computer designed from its inception to support an operating system based on a graphical user interface. Remarkably, this was a full decade before mass-market GUI computers became available.

The Alto’s interface – which used windows, icons and menus and allowed the user to open, move and delete files – embraced metaphor and a graphical approach. This is, of course, commonplace today, but at the time it caught the eye of Steve Jobs, who rightly saw this approach as the future of computing.

One of the best-known early GUIs was System 7, Apple’s pre-OS X operating system (and the first graphical user interface I used, many, many years ago!). Navigating System 7 (and, before it, Apple’s Lisa OS) was considerably easier than issuing commands via a keyboard.

This graphical interface, coupled with the mouse, changed computing forever. Users could interact with the objects within an interface, manipulating them directly.

The arrival of the first iPhone in 2007 took this a small but important step further. Instead of a mouse, iPhone users relied on their fingers to manipulate the interface directly.

This shift away from the abstraction of a mouse, one step removed, towards physically interacting with objects on a screen underlies our current context. On the desktop we still use mice, but in an increasingly mobile environment we interact with the UI directly, physically touching objects on a screen.

Understanding this history might seem like a distraction, but spending a little time familiarising yourself with it helps you understand timeless principles that are still relevant today, such as:

- Information Architecture

- Human-Computer Interaction

- The Use of Metaphor in UI

- Mental Models

- …

Of course, user interfaces have come a long, long way since the early 1970s, and we’re now witnessing the rise of non-graphical user interfaces, particularly conversational and voice interfaces. In this book, I’ll focus primarily on graphical user interfaces across a range of contexts:

- Desktop (Mouse)

- Tablet → Smartphone (Finger)

- Wrist (Finger)

If conversational and voice interfaces interest you, have no fear: I’ll explore where interfaces are heading in Chapter 9 (Where to from here for UI?), so all of the bases are covered.

Section 2: Designing Human Interfaces

The trouble with the term ‘user interface’ is that it abstracts humans (messy, unpredictable and… human!) into an anonymous category of ‘users’. In fact, these users are all different and, above all, they’re all human.

I’m not going to reinvent the wheel and call this book Building Beautiful HIs (‘Building Beautiful Human Interfaces’), but as you read it – and as you design – put some thought into the different humans who will use the designs you create. Consider:

- Age: Young and Old

- Gender: Male and Female (and everything in between or outside these terms)

- Geographical Context: Where in the world these users are.

- …

It’s critical to consider the above mix so that the UIs we design cater to as wide an audience as possible. Designing for accessibility and inclusivity is important in our increasingly diverse global culture. So as we design, let’s design responsibly and factor this in.

It’s no surprise to me that Apple chose to call its excellent guidelines the Human Interface Guidelines (HIG), not the User Interface Guidelines. By embracing the term ‘human’, Apple acknowledges that at the receiving end of every interface lies a human, and that human wants to get something done.

When you’re designing a UI, bear in mind that the person using your interface will very likely be dealing with everyday distractions. As such, it’s important to ensure your UI is easy to understand and distraction-free.

Humans are trying to achieve tasks – perhaps to book a flight, or to buy a book – while juggling other human tasks, for example, feeding a baby. Understanding this is critical. The best user interfaces get out of the way and help you to get things done with the minimum of fuss.

By focusing on first principles, you’ll improve your UI by:

- getting out of the user’s way;

- focusing on maximum functionality; and

- helping your users (preferably minimising opportunities for error and – when things go wrong, as they inevitably do – helping them to recover from those errors).

Few, if any, users will come to your interface without prior knowledge of other interfaces. As such, it’s important to understand the ‘received knowledge’ that your users bring to your interface.

We all use interfaces day in, day out and – as we use them – we learn conventions: standardised approaches to particular problems. Before we get into the fundamentals of designing UIs, it’s worth mentioning two important concepts:

- Metaphors; and

- Mental Models

I’ll explore these in the next two sections. Unless you’re already deeply familiar with these principles, I’d strongly recommend you resist the urge to fast-forward to Chapter 2. Every interface you design will benefit from an understanding of metaphors and mental models, so let’s dive in and explore them.

Section 3: Desktops and Metaphors

If you’ve ever bought anything from Amazon, you’ll have encountered metaphors. When you add something to your basket at Amazon, there is in fact no ‘basket’. The basket is just a metaphor – drawn from the real world – to help you understand where you store your items.

As you check out and pay for your items, there is (thankfully) no checkout line. You simply pay with your credit card and, magically, your purchases are on their way. This is metaphor in action.

By using everyday models from the ‘real’ world, we can design easily understandable UIs that need very little in the way of explanation.

Understanding the existing use of metaphors is important. If a convention exists – a shopping basket, for example – it’s best to stick with this metaphor and resist the urge to invent a new one.

When users navigate a UI that is new to them, they do so with the received knowledge of every other UI they have ever used. Even if your UI is for a new product that’s breaking new ground, there will be conventions you can draw upon.

Returning to our checkout metaphor, if your product features payments of any kind, your UI will benefit from following pre-existing checkout conventions. Users might:

- add items to their ‘basket’;

- continue browsing the ‘store’, before returning to their basket; and

- finally, ‘check out’.

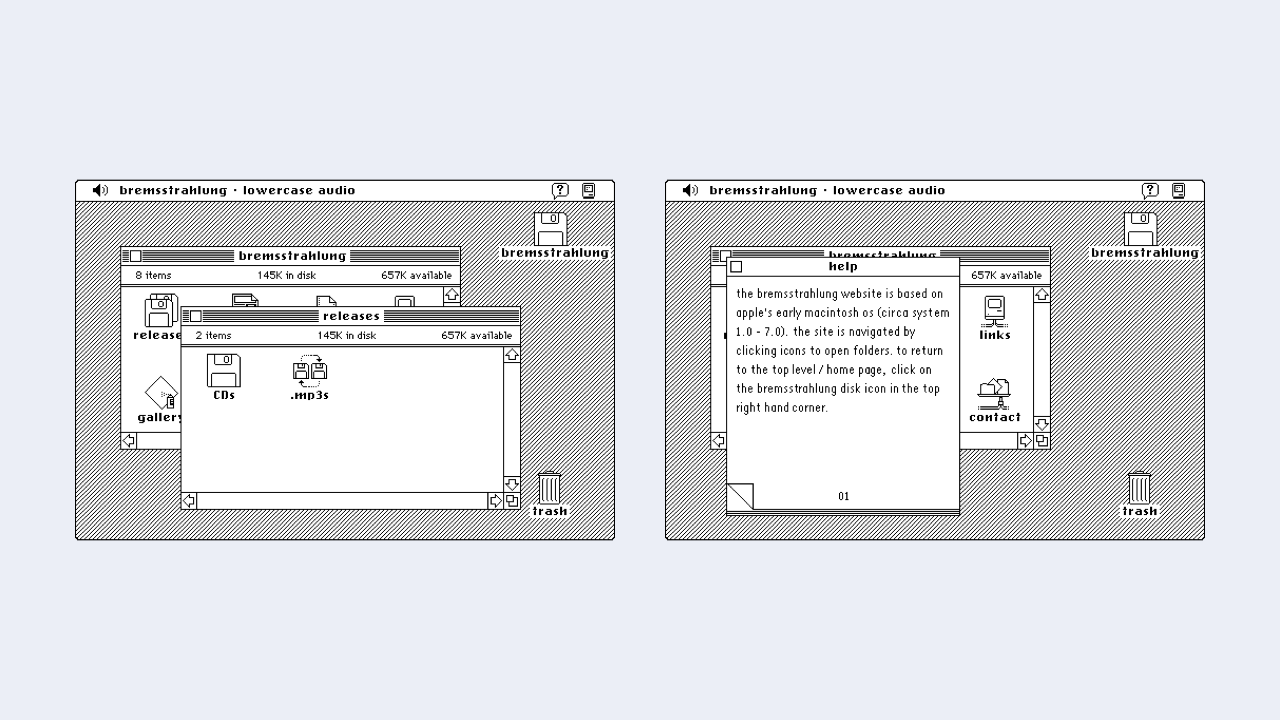

When Apple launched System 7 (and its earlier GUIs), it used metaphors extensively. By adopting a ‘desktop metaphor’, Apple helped users understand and build a mental model of how everything worked.

When a user stored a ‘document’ in a ‘folder’, they understood that there was no physical, paper document per se, or even a folder. They understood that these were simply metaphors to help explain what was happening under the hood in an easy-to-understand manner.

Similarly, when users discarded an item in the ‘trash’ and later ‘emptied the trash’, no trash lorry arrived at their house.

This foundational use of metaphor stretches so far back that it has informed almost every operating system since, and it has carried over from the desktop to mobile.

By using an ‘iconic’ approach, we can summarise concepts in an easy-to-follow manner. For example, we might use:

- A ‘cog’ icon that users understand represents ‘settings’.

- An ‘envelope’ icon that represents ‘email’.

- Or a ‘folder’ icon that represents ‘a place to organise files’.

Designers have co-opted ‘real world’ objects to represent digital objects. When we click on a cog, we know that the settings we’re about to modify aren’t made up of actual cogs; the cog is simply a metaphor.

You can improve your UI design by embracing the use of metaphor. Do so and your users will understand – based on their previous experience – how things work.

As you use desktop, tablet and mobile applications, take note of the different conventions that exist. You’ll find that much of the groundwork has already been laid by others and, if you stick to pre-existing conventions, you’ll ensure that your user interfaces tap into this collective understanding.

Section 4: Establishing Clear Mental Models

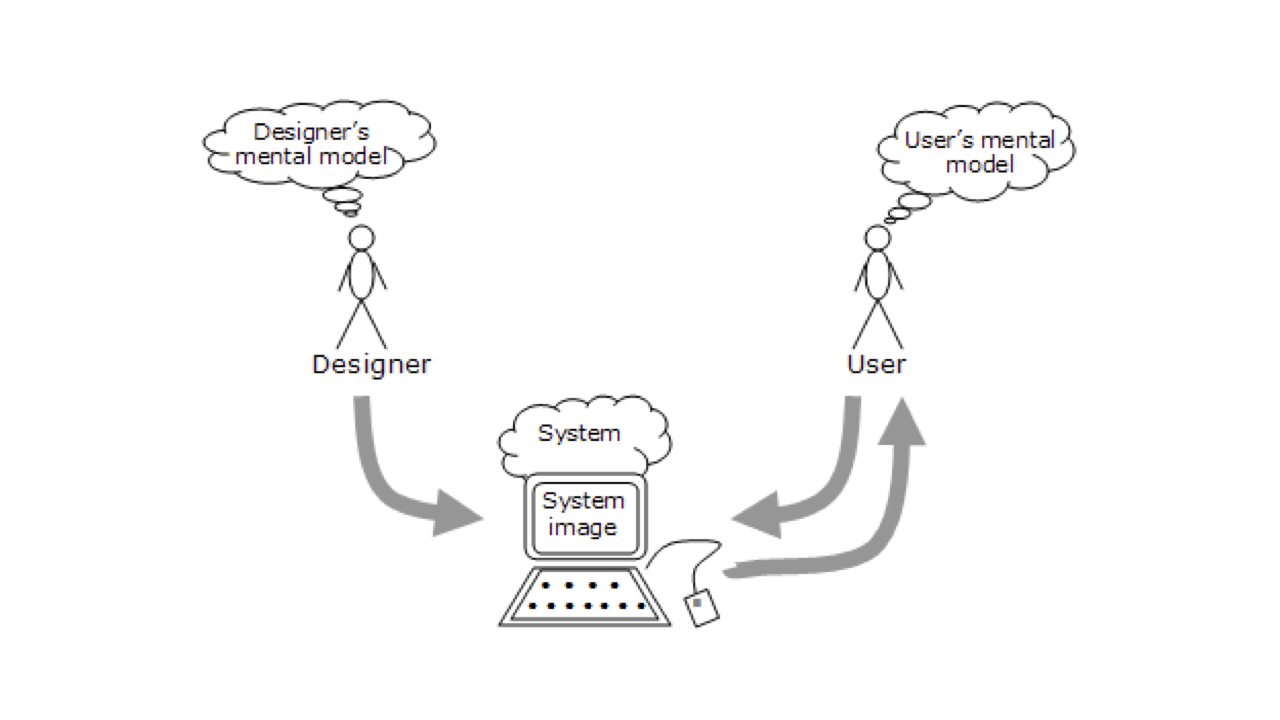

A mental model is an individual’s internal explanation of how something works in the real world.

The idea that people rely on mental models was first proposed by the Scottish psychologist Kenneth Craik in 1943. Craik suggested that the mind constructs ‘small-scale models’ of reality that it can use to anticipate events.

In 1971, Jay Wright Forrester – a pioneering computer engineer and systems scientist – developed Craik’s thinking, defining mental models as follows:

The image of the world around us, which we carry in our head, is just a model. Nobody in his head imagines all the world… [They] have only selected concepts, and relationships between them, and [they] use those to represent the real system.

Whatever interfaces we design, it’s highly likely that our users will have experienced other interfaces that have established a ‘mental model’ in their mind of how things work. As Jakob Nielsen puts it:

What users believe they know about a user interface strongly impacts how they use it. Mismatched mental models are common, especially with designs that try something new. […] Mental models are one of the most important concepts in human-computer interaction.

Users have mental models based upon their past experiences, and it’s important that we take these mental models on board when designing our user interfaces. A mental model is what the user believes about the system they are using. Put simply:

- Mental models are based upon beliefs, not facts: that is, they are models of what users know – or think they know – about the interface that you’re designing.

- Individual users have different mental models based upon their own unique past experiences.

One of the biggest problems we run into when designing user interfaces is the conflict between what we – as the designers of the interface – know about the underlying system and how it works, and how users expect things to work.

When designing your UI, it helps to think about how your interface relates to others’ interfaces. In short, users expect sites and applications to work alike. Introducing new models can confuse users, resulting in an interface that doesn’t work for them.

You – as the designer – might know how the interface works (because you created it), but the user is confused (because they have a different understanding of the interface you’ve designed, based on their past experiences with other interfaces).

Regardless of what we’re designing, it’s important to embrace users’ mental models and marry these with fundamental principles of design to ensure that the interfaces we build are easily understandable.

I’ll explore this in Chapter 3: Information Architecture, where I look at how we map out user interfaces at a high level, stressing the need for a clear overall concept and a strong visual hierarchy to ensure complex information is easy to understand and navigate.

Section 5: UI, Here and Now

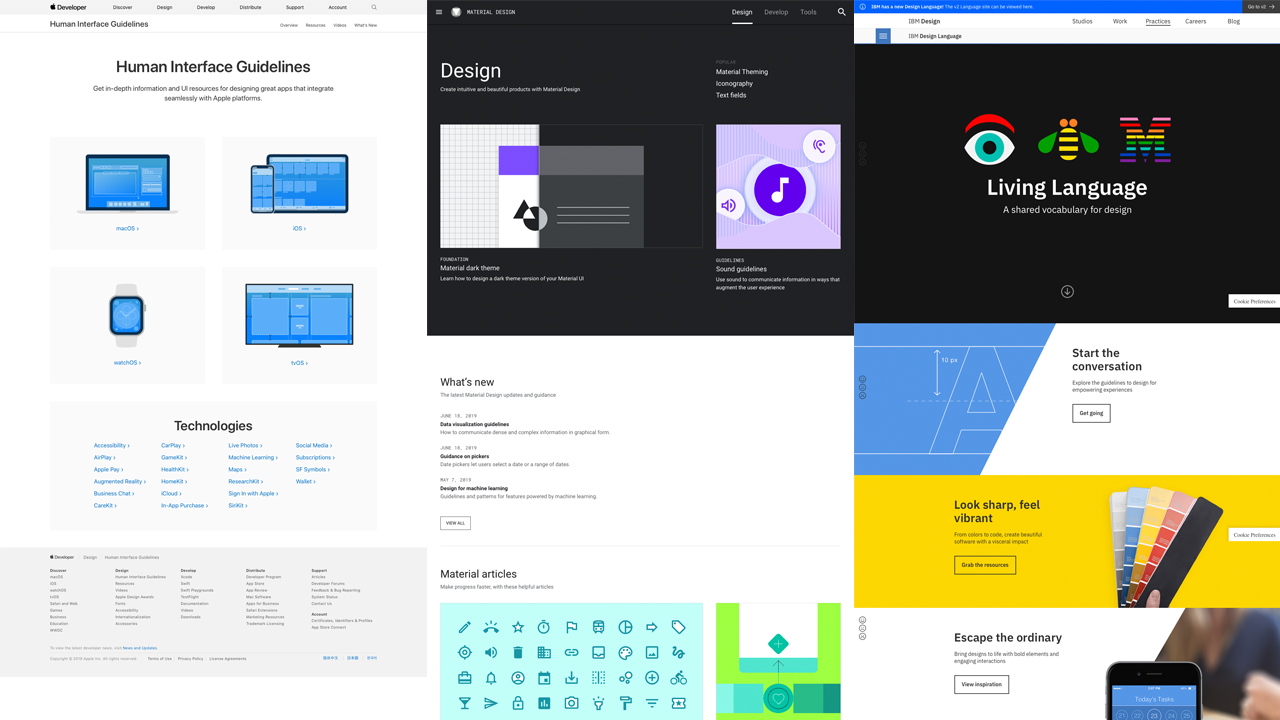

Fortunately, there is a wealth of resources available to us that can inform our understanding of UI design. Apple’s Human Interface Guidelines, Google’s Material Design, and IBM’s Living Language all offer lessons we can learn from, regardless of the devices we’re designing for.

These resources provide a useful overview of designing interfaces at a high level and are well worth exploring before we get into analysing how interfaces are built in Chapter 2.

As I noted earlier, I think the fact that Apple provides human interface guidelines – and not user interface guidelines – is an important distinction. We’re seeing a return to terms like human-centred design (as opposed to, say, user experience design), and in that light the naming of Apple’s Human Interface Guidelines looks prescient.

Apple is treading a bit of a tightrope. Clearly they don’t want to lose sight of their heritage (and their first set of groundbreaking Human Interface Guidelines), but – as you’ll see in the copy on their page – they use both terms: human interface and user interface.

Introduced in 2014, Google’s Material Design expanded on the ‘card’ motifs that debuted in Google Now, making greater use of grid-based layouts, responsive animations and transitions, padding, and depth effects such as lighting and shadows.

IBM’s Living Language – designed to be a shared vocabulary for design – represents IBM’s move into a design-driven culture and is well worth exploring. It is currently on version 2, and IBM also maintain an archive of past versions, which offer a great deal of practical advice for designing user interfaces.

As IBM put it in their Living Language: “Focus on users’ goals. Design tools that lead people to actionable insights. Be proactive, always anticipating users’ next moves and helping them prepare for change.”

Lastly, styleguides.io is a very useful roundup of other approaches to developing user interface libraries and style guides. It’s worth bookmarking and offers an insight into how a wide range of companies, both large and small, are moving towards the development of visually consistent user interface libraries.

As of 2019, styleguides.io has gathered 500+ resources, and it’s worth exploring to get a feel for how companies smaller in scale than Apple, Google and IBM are approaching the question of creating consistent user interface guidelines.

Closing Thoughts

All being well, this chapter has introduced you to some important aspects of computing history that paved the way to where we are today.

In the next chapter – Chapter 2: The Building Blocks of Interfaces – I’ll introduce the fundamental building blocks that interfaces are made from: Objects, Elements, Components, Pages and Flows, so that you can start to build your own user interface designs. 🎉

Further Reading

There are many great publications, offline and online, that will help further underpin your understanding of user interface design. I’ve included a few below to start you on your journey.

- I’d strongly suggest starting by exploring Apple’s Human Interface Guidelines, Google’s Material Design resources, and IBM’s Living Language (Version 1 and Version 2). These provide a useful overview of designing interfaces at a high level and offer an insight into the design vocabularies of different platforms.

- The Interaction Design Foundation have a useful overview of UI design titled ‘What is User Interface (UI) Design?’ It’s well worth a read for a high-level introduction to user interface design.

- Lastly, Nielsen Norman Group have a comprehensive overview of Mental Models that is well worth reading. They also have a wealth of further articles and videos on the topic that are worth exploring.

Christopher Murphy

@fehler

A designer, writer and speaker based in Belfast, Christopher mentors purpose-driven businesses, helping them to launch and thrive. He encourages small businesses to think big and he enables big businesses to think small.

As a design strategist he has worked with companies, large and small, to help drive innovation, drawing on his 25+ years of experience working with clients including Adobe, EA and the BBC.

The author of numerous books, he is currently hard at work on his eighth, ‘Designing Delightful Experiences’, for Smashing Magazine, and his ninth, ‘Building Beautiful UIs’, for Adobe. Both are accompanied by a wealth of digital resources and are drawn from Christopher’s 15+ years of experience as a design educator.

I hope you find this resource useful. I’m also currently working on a book for the fine folks at Smashing Magazine – ‘Designing Delightful Experiences’ – which focuses on the user experience design process from start to finish. It will be published in late 2019.

You might like to follow me on Twitter for updates on this book, that book and other projects I’m working on.